Welcome to 2025’s edition of “term so overused to mean so many different things that it starts to lose any real meaning in conversation because everyone is confidently using it to refer to different things.”

If you are a builder trying to build agentic solutions, this article might not be for you. It is for those who have been in a meeting, a boardroom, or a conversation where someone was talking about AI agents, and who are either (a) not really sure what an agent is and how it differs from the generative AI capabilities we’ve seen so far, (b) not really sure the person using the term knows what an agent is, or (c) thought they knew what an agent is until reading the first sentence of this article.

While we will reference Windsurf in this article to make some theoretical concepts more tractable, this is not a sales pitch.

Let’s get started.

The Very Basic Core Idea

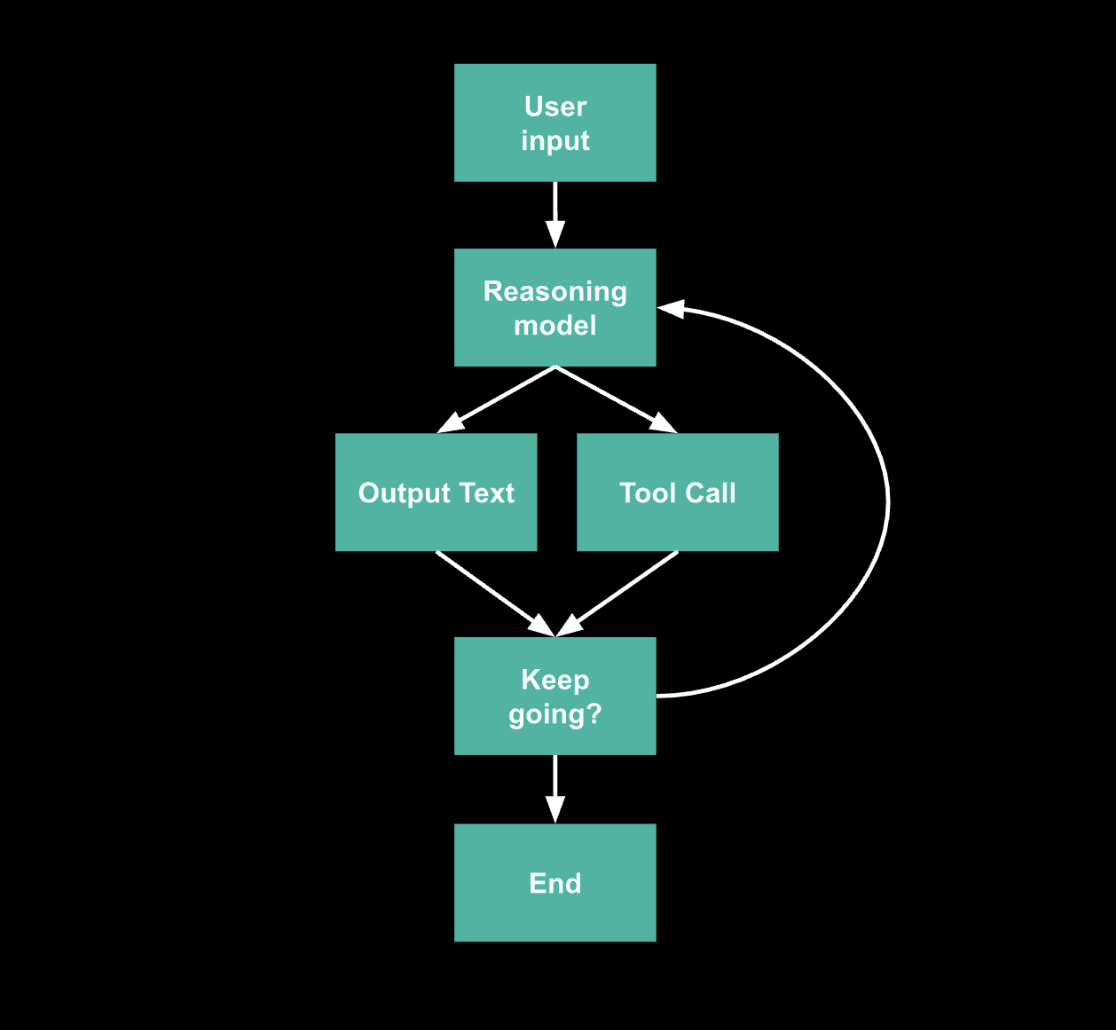

To answer the title of this article, an agentic AI system can simply be thought of as a system that takes a user input and then performs alternating calls to:

An LLM (we call this the “reasoning model”) to decide what action to take given the input, any additional automatically retrieved context, and the accumulating conversation. The reasoning model outputs (a) text that reasons through what the next action should be and (b) structured information that specifies the action (which action, values for its input parameters, etc.). The output “action” could also be that there are no actions left to take.

Tools, which need not have anything to do with an LLM, that execute the actions specified by the reasoning model and generate results that are incorporated into the information for the next invocation of the reasoning model. The reasoning model is essentially being prompted to choose among the set of tools and actions that the system has access to.

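This alternation creates a basic agentic loop: the reasoning model picks an action, a tool executes it, and the result feeds the next call to the reasoning model, until the model decides there are no actions left to take.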

Really, that’s it. There are different flavors of agentic system depending on how this agent loop is exposed to a user, and we’ll get into that later, but you are already most of the way there if you understand that these LLMs are used not as pure content generators (à la ChatGPT) but as tool-choice reasoning components.
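For readers who find code easier to follow than prose, here is a minimal sketch of that loop in Python. Everything in it is illustrative: `Decision`, `TOOLS`, `stub_reasoning_model`, and `run_agent` are hypothetical names, and the "reasoning model" is a hard-coded stand-in rather than a real LLM call. It is not how Windsurf or any particular product is implemented; it only shows the alternation between a model that chooses an action and tools that carry it out.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Decision:
    """What the (hypothetical) reasoning model returns on each turn."""
    reasoning: str                  # (a) free text reasoning about the next action
    tool: Optional[str] = None      # (b) which action to take; None means "no actions left"
    args: dict = field(default_factory=dict)  # (b) input parameters for that action


# Tools are ordinary functions; nothing about them involves an LLM.
TOOLS: dict[str, Callable[..., str]] = {
    "add": lambda a, b: str(a + b),
    "shout": lambda text: text.upper(),
}


def stub_reasoning_model(history: list[str]) -> Decision:
    """Stand-in for an LLM call: chooses the next action from the conversation so far."""
    tool_results = [h for h in history if h.startswith("tool:")]
    if len(tool_results) == 0:
        return Decision("I need the sum first.", "add", {"a": 2, "b": 3})
    if len(tool_results) == 1:
        total = tool_results[0].split("=", 1)[1]
        return Decision("Now format the answer.", "shout", {"text": f"total is {total}"})
    return Decision("Nothing left to do.")


def run_agent(user_input: str, model=stub_reasoning_model, max_steps: int = 10) -> list[str]:
    """Alternate between the reasoning model and tools until no action remains."""
    history = [f"user: {user_input}"]
    for _ in range(max_steps):      # cap the loop so a confused model cannot run forever
        decision = model(history)
        history.append(f"model: {decision.reasoning}")
        if decision.tool is None:   # the model decided there is nothing left to do
            break
        result = TOOLS[decision.tool](**decision.args)
        history.append(f"tool: {decision.tool}={result}")  # fed into the next model call
    return history


if __name__ == "__main__":
    for line in run_agent("What is 2 + 3?"):
        print(line)
```

In a real system the stub would be replaced by a call to an actual LLM that is prompted with the list of available tools, but the shape of the loop stays the same.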
